That Username Is Taken. How Does Google Know Instantly?

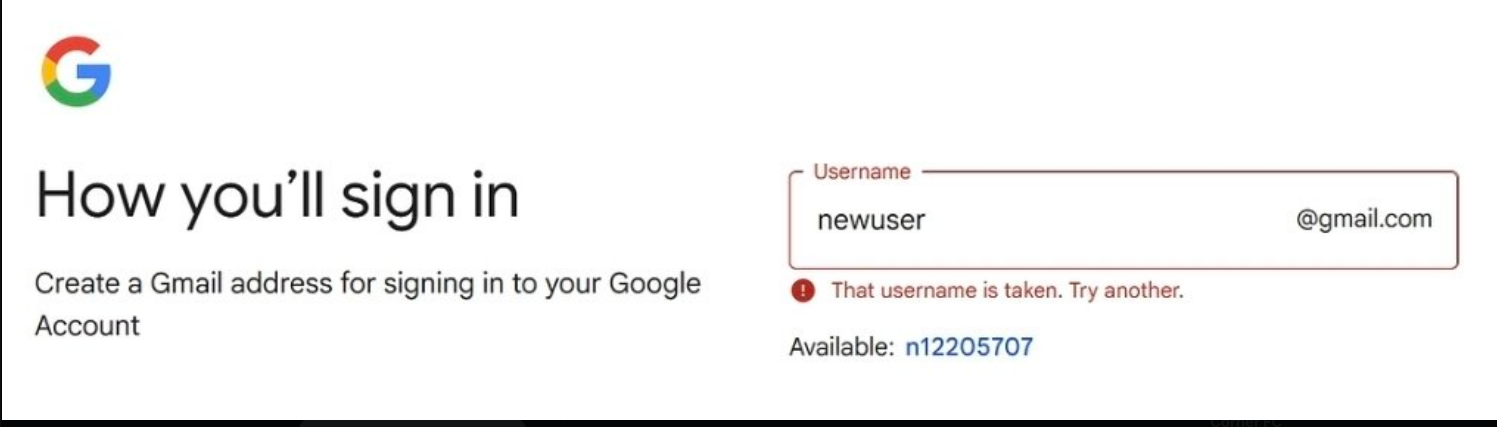

You type “newuser” into the Gmail signup screen. Already taken. The UI suggests “n12205707” before you have even stopped typing. No spinner. No network flicker. Just instant.

You are not doing an Elasticsearch query on every keypress. That would be a crime.

So how does it work? I want to walk through what I think is the right solution — the math behind it, and why most engineers reach for the wrong tool first.

Two Problems, Not One#

Most engineers ask: “How do I check if a username is available fast enough to feel instant?”

That is the wrong question. It conflates two problems with opposite requirements.

Problem 1: UX feedback. The user is typing. They need a signal — “this looks taken” or “this looks free” — without waiting 200ms for a server round trip. This needs to be fast. It does not need to be correct.

Problem 2: Uniqueness guarantee. When the user submits, the system must guarantee the username is not taken by anyone else. This must be correct. Speed matters less.

Solving both with the same mechanism is where engineers go wrong. You need two systems with a clear handoff between them.

The Classy Approach: In-Memory Trie#

My preferred approach for the hint layer: keep an in-memory trie of all registered usernames, update it asynchronously via delta pushes, and let the UI check locally in O(k) — where k is the length of the username being typed.

No network call. No latency. The check happens in memory on the pod handling the request.

The natural objection is memory. Let us do the math.

Worst-case memory estimate:

- Assume 2 billion registered usernames

- Average length: 10 characters

- Raw storage if you kept them as strings: 2B × 10 = 20 billion characters

- At 1 byte per character: ~20GB just for the raw strings

A trie is not storing raw strings. It is about prefix sharing. Common prefixes — “john”, “mike”, “alex” — collapse into shared nodes. Real usernames are not random; they cluster heavily around common names, numbers, and patterns. Prefix sharing cuts the real memory by a large multiple.

Modelling the node cost:

- Worst-case: ~1 node per character = ~20 billion nodes

- With tight-packed arrays, bitsets, and offset indices instead of raw pointers: ~8 bytes per node

- Worst-case total: 20B × 8 = 160GB

That sounds large. But now shard by the first two characters.

Sharding:

- Characters are

a–zand0–9: 36 options - First two characters: 36² = 1,296 shards

- 160GB / 1,296 ≈ ~123MB per shard

123MB fits comfortably in memory on any front-end pod or edge POP. You assign shards to pods, each pod owns its slice of the trie, and the UI routes the check to the right pod based on the first two characters of the username.

Suddenly “instant check” is just a local memory read.

type TrieNode struct {

children [36]*TrieNode // a-z (0-25), 0-9 (26-35)

isEnd bool

}

type Trie struct {

root *TrieNode

mu sync.RWMutex

}

func charIndex(c byte) int {

if c >= 'a' && c <= 'z' {

return int(c - 'a')

}

return int(c-'0') + 26

}

// IsTaken checks if a username exists in O(k).

func (t *Trie) IsTaken(username string) bool {

t.mu.RLock()

defer t.mu.RUnlock()

node := t.root

for i := 0; i < len(username); i++ {

idx := charIndex(username[i])

if node.children[idx] == nil {

return false

}

node = node.children[idx]

}

return node.isEnd

}

// Add inserts a username — called on delta push from the reservation stream.

func (t *Trie) Add(username string) {

t.mu.Lock()

defer t.mu.Unlock()

node := t.root

for i := 0; i < len(username); i++ {

idx := charIndex(username[i])

if node.children[idx] == nil {

node.children[idx] = &TrieNode{}

}

node = node.children[idx]

}

node.isEnd = true

}

The trie is updated asynchronously — delta pushes from a Kafka topic or Redis pub/sub stream whenever a username is reserved. Individual pods do not need to be perfectly in sync; a few seconds of lag is acceptable for a UI hint.

Where the Trie Does Double Duty: Suggestions#

Look at what Gmail actually shows: “Available: n12205707”

That is not just a lookup — it is a suggestion. And the trie handles this naturally.

When a username is taken, you walk the prefix tree from the matched node and find sibling paths that are not yet occupied — available leaves adjacent to the taken one. This is how “n12205707” gets generated from “newuser”: the system walks nearby nodes in the trie and surfaces an unoccupied alternative. Still O(k), still in memory.

No separate suggestion service needed. The trie earns its complexity by solving two problems at once.

What Most People Actually Ship#

For completeness, here is the spectrum:

The Elasticsearch approach — prefix query on every keypress with 150ms debounce and some caching. Works at low scale. Becomes expensive and fragile at high signup traffic. The “pray at peak traffic” strategy.

The WebSocket approach — client streams keystrokes, server replies with availability in real time. Stateful, low-latency, fine at moderate scale. But you have now built a hot stateful service just for a UI hint.

The trie approach — in-memory, O(k), local to the pod. Scales with sharding. The UI feels like magic because it is not making a network call at all.

The Consistency Layer: Only the Database Counts#

The trie is a hint system. It improves UX. It does not guarantee uniqueness.

Two users can both type “mikemwita”, both get “available” from the trie, and both click submit simultaneously. You cannot solve that with any local data structure — trie, Bloom filter, or otherwise.

The guarantee comes from one place: a unique constraint in the database, enforced at write time with a distributed lock.

import (

"context"

"database/sql"

"fmt"

"strings"

"time"

"github.com/google/uuid"

"github.com/redis/go-redis/v9"

)

const lockTTL = 5 * time.Second

// releaseLock releases the Redis lock only if we still own it.

var releaseLock = redis.NewScript(`

if redis.call("get", KEYS[1]) == ARGV[1] then

return redis.call("del", KEYS[1])

else

return 0

end

`)

// ReserveUsername atomically claims a username for userID.

// Returns false if taken or if another reservation is in flight.

func ReserveUsername(ctx context.Context, db *sql.DB, rdb *redis.Client, username string, userID int64) (bool, error) {

username = strings.ToLower(username)

lockKey := fmt.Sprintf("lock:username:%s", username)

lockValue := uuid.New().String()

// Acquire distributed lock — only one writer at a time

ok, err := rdb.SetNX(ctx, lockKey, lockValue, lockTTL).Result()

if err != nil {

return false, err

}

if !ok {

return false, nil // another reservation is in flight

}

defer releaseLock.Run(ctx, rdb, []string{lockKey}, lockValue)

// Final check against the DB — the only source of truth

var exists int

err = db.QueryRowContext(ctx,

"SELECT 1 FROM usernames WHERE username = $1", username,

).Scan(&exists)

if err != nil && err != sql.ErrNoRows {

return false, err

}

if err == nil {

return false, nil // already taken

}

_, err = db.ExecContext(ctx,

"INSERT INTO usernames (username, user_id) VALUES ($1, $2)",

username, userID,

)

return err == nil, err

}

The database has a unique index on username. Even without the lock, a concurrent write fails with a constraint violation. The lock makes the failure clean — a clear “taken” response instead of a caught exception. After a successful reservation, a delta event is pushed to the trie update stream so pods stay current.

The Full Picture#

Three layers, three distinct concerns:

| Concern | Mechanism | Latency | Correctness |

|---|---|---|---|

| UX feedback while typing | Sharded in-memory trie | < 1ms | Eventually consistent |

| Alternative suggestions | Same trie, prefix walk | < 1ms | Eventually consistent |

| Uniqueness guarantee | DB + distributed lock | 50–200ms | Strongly consistent |

While you type, the trie responds locally. When it says taken, it walks the tree and surfaces a suggestion from the same data structure. When you submit, the database makes the final call.

The UI feels instant because for the vast majority of keystrokes, it is: a memory read on the local pod, O(k) on the username length, no network, no latency budget to worry about.

Things are just different at this scale. But the ideas are not complicated — just applied with precision.